
Google says: "As we have outlined, we use a range of industry-standard scanning techniques, including hash-matching technology and artificial intelligence, to identify and remove CSAM that has been uploaded to our servers." But Apple, it turns out, is ...

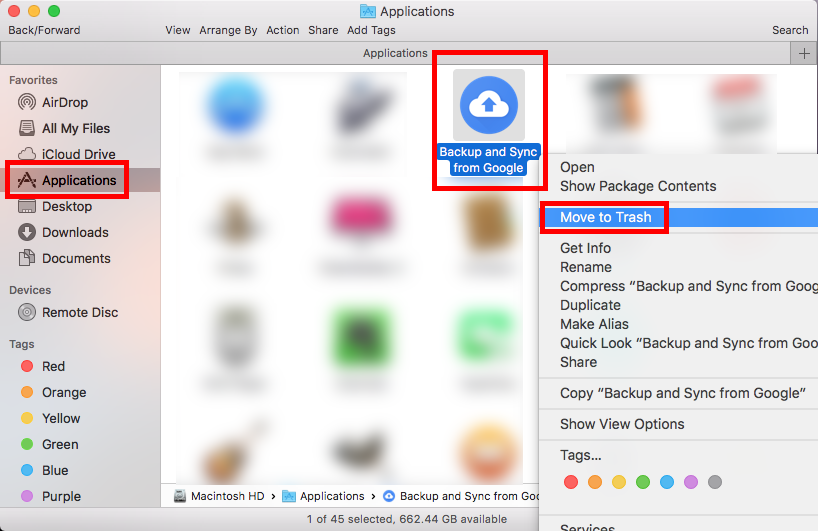

By Zack Duffman.

When it comes to cloud photo storage, Google Photos holds more than four trillion photos and videos for over a billion users. Millions of Apple users have Google Photos on their iPhones, iPads and Macs, but Apple has just issued a serious warning about Google's platform and given its users a reason to delete the app.

It has been a terrible week for Apple on the privacy front, which is not what the iPhone maker needed in the run-up to the launch of the iPhone 13 and iOS 15. A week ago, the company strangely (although inevitably) backtracked on its flawed plan to screen its users' images on their devices to root out child abuse imagery.

More from Forbes: Apple Backtracks on iPhone Photo Scanning, For Now.

CSAM screening itself is not controversial. All the major cloud platforms do it, including Google Photos. Google has been doing this for years, telling me, "Child sexual abuse content has no place on our platform."
